Q-learning Update Rule: A Step-By-Step Explanation

Table of Contents

- Introduction to Q-Learning

- Understanding the Q-Learning Update Rule

- Key Components of the Q-Learning Algorithm

- The Role of Policy and Actions in Learning

- Deep Q-Learning: An Advanced Approach

- Comparison: Q-Learning vs. Other Reinforcement Learning Techniques

- How to Implement the Q-Learning Update Rule: A Step-by-Step Guide

- Frequently Asked Questions about Q-Learning

Introduction to Q-Learning

Q-learning is a type of reinforcement learning that helps machines learn from the consequences of their actions. It allows an agent to learn which actions maximize cumulative reward in a given environment. This is significant in AI because it enables systems to improve their performance without explicit programming for every situation.

Reinforcement learning (RL) involves three main components: an agent, an environment, and rewards. The agent learns by taking actions in the environment. The environment provides feedback in the form of rewards, letting the agent know if its action was good or bad. The learning process continues as the agent experiments with different actions.

In the Q-learning framework, the agent uses something known as a Q-table. This table stores values representing the expected rewards for taking specific actions in various states (Source: GeeksforGeeks). Over time, as the agent interacts with the environment, it updates this table based on the feedback it receives, helping it find the best action in each situation.

Understanding how agents and environments work is crucial. The agent receives different states from the environment and must make decisions that lead to rewards. After each decision it applies the Q-learning update rule, which refines its Q-values so that they move closer to the maximum reward it can expect to collect from that point on (Source: Wikipedia).

In summary, Q-learning equips agents to navigate their environments intelligently, maximizing rewards through experience. The simplicity and effectiveness of this method make it a powerful tool in AI development.

Key takeaway: Q-learning empowers agents to learn optimal strategies by interacting with their environments and adjusting actions based on received rewards.

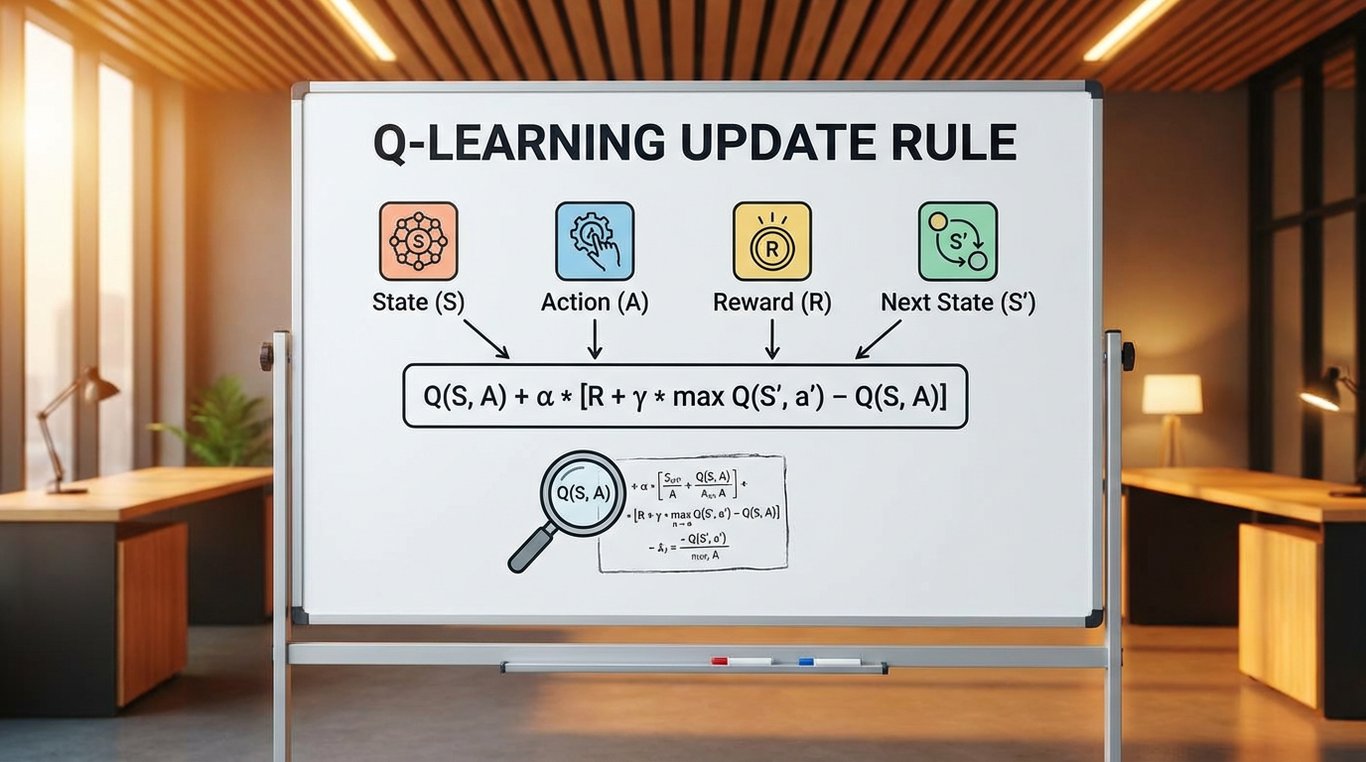

Understanding the Q-Learning Update Rule

The Q-value function is a key element in Q-learning. It estimates the total discounted reward the agent can expect if it takes a specific action in a given state and acts well afterwards.

The purpose of the Q-learning update rule is to improve these Q-values. It allows the agent to learn better actions over time.

The Q-learning update formula is expressed as:

\[ Q(s, a) \leftarrow Q(s, a) + \alpha \left( r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right) \]

Here, \( Q(s, a) \) represents the current Q-value, while \( \alpha \) is the learning rate.

Components of the Formula

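In this formula, each part has a role. The learning rate \( \alpha \), a value between 0 and 1, determines how quickly the agent adapts its Q-values: a small value makes small adjustments, while a value near 1 nearly overwrites the old estimate with the new one.

Rewards play a vital role in updating the Q-values. The immediate reward \( r \) shows how valuable an action was in a specific state, and the discount factor \( \gamma \) scales the value of what comes next.

The term \( \max_{a'} Q(s', a') \) is the highest Q-value currently estimated for the next state \( s' \). It guides learning toward actions that lead to valuable states.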
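To make the roles of these terms concrete, here is a minimal sketch of a single update in Python. The function name `q_update` and the numbers in the example are illustrative assumptions, not part of any library.

```python
def q_update(q_sa, reward, next_q_values, alpha=0.1, gamma=0.9):
    """Return the new Q(s, a) after one application of the update rule."""
    best_next = max(next_q_values)           # max over a' of Q(s', a')
    td_target = reward + gamma * best_next   # r + gamma * max_a' Q(s', a')
    td_error = td_target - q_sa              # gap between target and current estimate
    return q_sa + alpha * td_error           # move a fraction alpha toward the target


# Hypothetical values: Q(s, a) = 2.0, r = 1.0, Q-values of the next state = [0.0, 3.0]
new_q = q_update(2.0, 1.0, [0.0, 3.0], alpha=0.5, gamma=0.9)
print(new_q)  # roughly 2.85: halfway between the old value 2.0 and the target 3.7
```

With a learning rate of 0.5 the estimate moves halfway to the target; a smaller learning rate would move it less.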

Actions and State Transitions

Actions affect state transitions, which leads to new experiences. When an agent takes an action in a state, it moves to another state.

Q-learning relies on exploration of different actions. Because the agent can only update the Q-values of actions it actually tries, it needs to sample various actions to discover new rewards.

Updating Q-values helps agents build knowledge over time. By continuously applying the update rule, agents refine their strategies and improve performance.

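A good policy emerges from consistent updates. For tabular Q-learning, the Q-values converge to the optimal ones provided every state-action pair keeps being visited and the learning rate is reduced appropriately over time. The agent then achieves maximum rewards by choosing the highest-valued action in each state.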

To summarize, the Q-learning update rule is essential for developing effective strategies in reinforcement learning. It helps agents learn valuable actions based on rewards and state transitions.

Key Components of the Q-Learning Algorithm

Understanding the key components of the Q-learning algorithm helps clarify how an agent learns effectively. This learning process involves states, actions, rewards, and a crucial tool called the Q-table. Each of these elements plays a vital role in how the algorithm functions.

First, states and actions form the backbone of learning. A state represents a specific situation the agent finds itself in, while an action is what the agent can do in that state. For example, if the agent is learning to navigate a maze, each position in the maze is a state, and moving left, right, up, or down are the possible actions. The agent uses these actions to explore its environment and gather information that helps update its knowledge over time.

The Role of the Discount Factor

The discount factor controls how much future rewards count. This value, typically between 0 and 1, decides the importance of future rewards compared to immediate ones. A higher discount factor means the agent values future rewards more. For instance, with a discount factor of 0.9, a reward one step away is worth 90% of an immediate one, while a reward ten steps away is worth about 35% (0.9^10 ≈ 0.35). Values close to 1 encourage the agent to seek long-term benefits rather than short-term gains (Source: GeeksforGeeks).
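The effect of discounting is easy to check numerically. The short sketch below computes the weight a reward receives depending on how many steps away it is; the helper name `discounted_return` and the reward sequence are made up for illustration.

```python
gamma = 0.9

# Weight applied to a reward received k steps in the future: gamma ** k
weights = {k: gamma ** k for k in (0, 1, 5, 10)}


def discounted_return(rewards, gamma):
    """Sum of rewards, each scaled by gamma raised to its delay in steps."""
    return sum(r * gamma ** k for k, r in enumerate(rewards))


# Hypothetical episode: nothing for three steps, then a reward of 10
total = discounted_return([0, 0, 0, 10], gamma)
print(weights)
print(total)  # about 7.29, since 10 * 0.9 ** 3 = 7.29
```

Lowering `gamma` shrinks these weights faster, which is what makes the agent more short-sighted.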

What is a Q-Table?

A Q-table is a central tool within the Q-learning algorithm. It stores Q-values, which represent the expected rewards for taking specific actions in particular states. Initially, all Q-values can be set to zero. As the agent explores different states and actions, it updates these values using the Q-learning update rule. This iterative process allows the agent to learn the best actions to take over time (Source: Wikipedia).
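In code, a Q-table can be as simple as a list of lists. The sketch below assumes a hypothetical world with 16 states and 4 actions; the helper `best_action` is an illustrative name, not a library function.

```python
n_states, n_actions = 16, 4   # assumed sizes, e.g. a 4x4 grid with four moves

# One row per state, one column per action, every value starting at zero
q_table = [[0.0] * n_actions for _ in range(n_states)]


def best_action(q_table, state):
    """Index of the action with the highest Q-value in `state` (first one on ties)."""
    row = q_table[state]
    return row.index(max(row))


q_table[5][2] = 1.5           # pretend an update raised Q(state=5, action=2)
print(best_action(q_table, 5))
```

Reading the table row by row gives the agent's current preference in each state.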

In summary, understanding states, actions, the discount factor, and the Q-table illuminates how the Q-learning update rule helps an agent learn. These elements work together to create a robust learning process, guiding the agent toward better choices.

The Role of Policy and Actions in Learning

TL;DR: A policy defines an agent's actions in Q-learning, influencing its learning outcomes. The right policy can lead to better rewards.

In Q-learning, a policy is a strategy that guides an agent's behavior. It tells the agent which action to take in a given state. For example, if an agent finds itself in a maze, the policy will help it decide whether to turn left, right, or go straight. This decision-making process is crucial for effective learning, because it determines which state-action pairs the Q-learning update rule gets to refine.

Different policies can lead to different learning outcomes. A greedy policy, which always picks the action with the highest current Q-value estimate, can lock onto a mediocre option early and miss better opportunities. In contrast, an exploratory policy encourages the agent to try new actions, which can lead to discovering hidden rewards. For instance, if the agent in our maze occasionally chooses an action that currently looks worse, it might find a shortcut that the greedy approach would overlook.

Actions and Rewards Connection

The relationship between actions and rewards is key in shaping policies. When an agent takes an action and receives a reward, it learns from that experience. For instance, if an agent receives a high reward for solving a puzzle, it will likely repeat the actions that led to that achievement. This cycle builds the agent's Q-values, which represent the expected rewards for taking specific actions in certain states. The more defined and effective the policy, the better the actions and rewards align.

An effective policy encourages exploration and exploitation, allowing the agent to balance trying new things while leveraging known rewards. This balance is essential for improving the agent's performance and efficiency over time.
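One common way to balance exploration and exploitation is an epsilon-greedy rule. The following is a minimal sketch, assuming the Q-values of the current state are available as a plain list; the function name and the `rng` parameter are illustrative choices.

```python
import random


def epsilon_greedy(q_row, epsilon, rng=random):
    """Pick a random action with probability epsilon, otherwise the greedy one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_row))   # explore: any action, uniformly at random
    return q_row.index(max(q_row))         # exploit: best-known action (first on ties)


q_row = [0.1, 0.9, 0.3]                    # hypothetical Q-values for three actions
print(epsilon_greedy(q_row, epsilon=0.0))  # with epsilon 0 this is always action 1
print(epsilon_greedy(q_row, epsilon=0.1))  # usually 1, occasionally a random action
```

Setting `epsilon` to 0 recovers the purely greedy policy, while 1 gives purely random behavior.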

The takeaway is that a well-structured policy is vital for guiding appropriate actions that maximize rewards in the learning process.

Deep Q-Learning: An Advanced Approach

Deep Q-learning builds upon traditional Q-learning by integrating deep learning methods. This evolution allows agents to make better decisions in complex environments.

How deep learning enhances Q-learning

Deep learning uses neural networks to approximate the Q-value function. This is crucial when the state or action space is large. In simpler terms, a neural network helps the agent understand better how to act from its current state. Traditional Q-learning struggles with high-dimensional inputs, like images or complex game scenarios.
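The idea of replacing the table with a function can be illustrated without a deep learning library. The sketch below uses a linear approximator, a deliberately simplified stand-in for a neural network, and applies the same Q-learning target to its weights. All names and numbers are assumptions for illustration; practical deep Q-networks add components such as experience replay and a separate target network.

```python
def q_values(weights, features):
    """Q(s, a) for every action: dot product of that action's weights with the features."""
    return [sum(w * x for w, x in zip(w_a, features)) for w_a in weights]


def semi_gradient_update(weights, features, action, reward, next_features,
                         alpha=0.1, gamma=0.9, done=False):
    """One Q-learning step on a linear approximator; modifies `weights` in place."""
    q_sa = q_values(weights, features)[action]
    best_next = 0.0 if done else max(q_values(weights, next_features))
    td_error = reward + gamma * best_next - q_sa
    # For a linear model, the gradient of Q(s, a) w.r.t. its weights is the feature vector
    for i, x in enumerate(features):
        weights[action][i] += alpha * td_error * x
    return td_error


# Hypothetical setup: 2 actions, 3 state features, weights starting at zero
weights = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
error = semi_gradient_update(weights, [1.0, 0.0, 0.5], action=1, reward=1.0,
                             next_features=[0.0, 1.0, 0.0])
print(error, weights)
```

Because states are described by features rather than table rows, an update for one state also changes the estimates for similar states, which is what lets this approach scale to large state spaces.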

Benefits of using neural networks in Q-learning

Using neural networks provides several significant advantages:

- Scalability: Neural networks can handle vast amounts of data, enabling agents to learn from experiences in dynamic environments.

- Feature Extraction: They automatically identify important features in the input data, reducing the need for manual feature design.

- Improved Performance: On high-dimensional inputs, deep Q-learning can succeed where a Q-table is impractical. For example, the DQN (Deep Q-Network) model learned to play Atari games from screen pixels, achieving human-level performance in several cases.

Use cases for deep Q-learning in complex environments

Deep Q-learning is useful in various fields, including:

- Robotics: In robotic navigation, agents can adapt to new terrains and obstacles using deep Q-learning methods.

- Gaming: Deep Q-networks were famously demonstrated on Atari games, where agents learn to make decisions directly from the screen. Well-known agents for more complex titles such as Dota 2 and StarCraft II relied mainly on other reinforcement learning methods.

- Finance: Deep Q-learning has been explored for developing trading strategies based on historical data and market trends.

The deep Q-learning framework expands the capabilities of the traditional algorithm, allowing agents to effectively navigate intricate states and make rewarding choices. This advancement is vital for building AI systems that learn and adapt in real-time.

Quotable Takeaway: Deep Q-learning keeps the Q-learning update rule but swaps the table for a neural network, making it a powerful tool for learning in complex environments.

Comparison: Q-Learning vs. Other Reinforcement Learning Techniques

| Feature | Q-Learning | Policy Gradient Methods | Model-Free Approaches | Model-Based Approaches |
|---|---|---|---|---|
| Learning Method | Off-policy | On-policy | Does not require a model | Requires a model |
| Value Allocation | Q-table | Directly parameterizes policy | Simplifies computation | Needs more computations |
| Optimality Guarantee | Yes, under certain conditions | No guarantee | Varies | Depends on model accuracy |
| Exploration Strategy | Balances between exploitation and exploration | Tends to explore more | Varies | Generally less exploratory |
| Convergence Speed | Generally slower | Often faster | Fast convergence is possible | Can be both slow or fast |
| Situations Preferred | Discrete action spaces | Continuous action spaces | Straightforward tasks | Complex and dynamic domains |

Bottom Line: Q-learning is effective for many scenarios, particularly where rewards need meticulous tracking.

Comparing Approaches

Q-learning and policy gradient methods differ mainly in what they learn. Q-learning stores an action value for each state-action pair in a Q-table and derives its policy from those values, while policy gradients directly optimize the policy itself. Q-learning therefore provides explicit value estimates, but the table grows with the number of states and actions. It's ideal for simpler environments with defined state-action pairs.

When discussing model-free vs. model-based methods, Q-learning is model-free, meaning it doesn't need a model of the environment. This allows it to adapt well to environments where transitions and rewards are uncertain. In contrast, model-based approaches require knowledge of how states change and how rewards are distributed. These approaches can be effective in more complex scenarios, but they rely on accurate modeling.

Why Choose Q-Learning?

Q-learning is often preferred in situations with discrete action spaces and when the rewards are delayed or complex. Its ability to handle stochastic transitions makes it useful in many real-world applications. For example, industries like finance or gaming can benefit greatly from Q-learning's systematic approach to learning.

In summary, while Q-learning is powerful, each method has its strengths and weaknesses. Choose based on your specific needs and the environment's nature. As you explore reinforcement learning, considering these factors will guide you to the right choice.

How to Implement the Q-Learning Update Rule: A Step-by-Step Guide

Implementing the Q-learning update rule can seem daunting, but following these straightforward steps can make it manageable. Here’s how to get started:

1. Set Up Your Environment: Start by choosing a suitable environment for testing your Q-learning algorithm. Popular options include Gridworld or OpenAI’s Gym (now maintained as Gymnasium). These environments provide various states and actions for your agent to explore.

2. Define the States: Clearly outline the states your agent will encounter. In a Gridworld, for example, each cell represents a state. If your environment has 16 cells, then you have 16 states.

3. Initialize the Q-Table: Create a Q-table with rows for each state and columns for each action. Initially, set all Q-values to zero so that no action is favored before the agent has any experience.

4. Choose an Action Policy: Implement an action policy, like the epsilon-greedy strategy. With ε = 0.1, for example, your agent picks a random action 10% of the time and the best-known action otherwise, which lets it keep discovering better paths to rewards (Source: A Beginner’s Guide to Q-Learning).

5. Update the Q-Values: Use the Q-learning formula to update the Q-values based on actions taken and rewards received. After each step, calculate the new Q-value for the state-action pair just visited:

   \[ Q(s, a) \leftarrow Q(s, a) + \alpha \left( r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right) \]

6. Set Learning Parameters: Adjust the learning rate (α) and discount factor (γ). A common choice for γ is 0.9, balancing immediate and future rewards.
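The steps above can be sketched as one short training loop. The example below uses a hand-written 4x4 Gridworld instead of an external library so that it stays self-contained; the goal position, the small step cost, the episode count, and all function names are assumptions made for illustration.

```python
import random

ROWS, COLS = 4, 4                            # 16 states, one per grid cell
GOAL = ROWS * COLS - 1                       # assumed goal: bottom-right cell
MOVES = [(-1, 0), (1, 0), (0, -1), (0, 1)]   # up, down, left, right


def step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    r, c = divmod(state, COLS)
    dr, dc = MOVES[action]
    r = min(max(r + dr, 0), ROWS - 1)        # moving into a wall leaves the agent in place
    c = min(max(c + dc, 0), COLS - 1)
    nxt = r * COLS + c
    if nxt == GOAL:
        return nxt, 1.0, True
    return nxt, -0.01, False                 # small step cost (an assumption)


def train(episodes=2000, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0] * len(MOVES) for _ in range(ROWS * COLS)]   # Q-table of zeros
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 200:      # cap episode length
            if rng.random() < epsilon:       # epsilon-greedy action choice
                action = rng.randrange(len(MOVES))
            else:
                best = max(q[state])
                action = rng.choice([a for a, v in enumerate(q[state]) if v == best])
            nxt, reward, done = step(state, action)
            # No future value is added once the episode has ended
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            steps += 1
    return q


def greedy_path_length(q, max_steps=50):
    """Follow the greedy policy from the start; None if the goal is not reached."""
    state, n = 0, 0
    while state != GOAL and n < max_steps:
        state, _, _ = step(state, q[state].index(max(q[state])))
        n += 1
    return n if state == GOAL else None


q = train()
print(greedy_path_length(q))
```

On this grid the shortest route from the top-left to the bottom-right corner takes six moves, so a well-trained table should typically yield a greedy path of that length.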

Common Pitfalls to Avoid

Failing to Explore: One of the biggest mistakes is not allowing enough exploration. Ensure your epsilon value is sufficiently high at the start.

Ignoring State Representation: Make sure your states accurately represent the environment. Misrepresentation can lead to poor learning outcomes.

Overfitting to Noise: In a noisy environment, a single lucky or unlucky reward can distort a Q-value. Use a smaller learning rate, or reduce it over time, so that estimates average over many samples, and monitor performance regularly.
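One way to avoid the first pitfall is to start with a high epsilon and lower it as training progresses. The schedule below is a minimal sketch; the function name and the default start, floor, and decay values are illustrative assumptions rather than recommended settings.

```python
def decayed_epsilon(episode, start=1.0, end=0.05, decay=0.995):
    """Exponentially decay epsilon from `start`, never going below `end`."""
    return max(end, start * decay ** episode)


# Exploration rate at a few points during training
for episode in (0, 100, 500, 1000):
    print(episode, round(decayed_epsilon(episode), 3))
```

Early episodes are then almost entirely exploratory, while later ones mostly exploit what has been learned but still keep a small amount of exploration.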

Following these steps can help you successfully implement the Q-learning update rule. Each step shapes your agent’s learning process, leading to more effective decision-making over time. A clear plan and avoidance of common mistakes will make the implementation smoother.

Frequently Asked Questions about Q-Learning

What is the significance of the discount factor in Q-learning?

The discount factor is crucial in Q-learning because it balances immediate and future rewards. A discount factor, represented by gamma (γ), ranges between 0 and 1. If γ is close to 1, the agent values future rewards almost as much as immediate ones. A lower γ means the agent prioritizes immediate rewards. Adjusting this factor helps the agent learn how to make decisions effectively in different environments. It directly influences the agent's strategy and its learning efficiency over time.

How does Q-learning differ from other reinforcement learning algorithms?

Q-learning is a model-free, value-based algorithm. Model-free means it doesn't require a model of the environment to learn. Other model-free algorithms, like policy-gradient methods, adjust the probabilities of actions directly. Q-learning, on the other hand, focuses on learning the value of actions in each state, which is reflected in the Q-table. This makes Q-learning especially useful for problems with discrete action spaces where the agent can evaluate and adjust its strategy based on past experiences.

Can Q-learning be used for continuous action spaces?

Q-learning is typically designed for discrete action spaces, because the update takes a maximum over all actions in the next state. However, it can be adapted for continuous action spaces with advanced techniques. One such method involves combining Q-learning with function approximation, like deep learning, to estimate Q-values for continuous actions. Another is to split the continuous range into a finite set of discrete actions. These adaptations allow agents to operate in environments where they must take a wide range of actions, making Q-learning more versatile in real-world applications such as robotics and autonomous driving.

What are the common applications of Q-learning in real-world scenarios?

Q-learning has been applied in various areas, including game playing, robotics, and finance. For example, it can drive game AI in video games, enabling characters to adapt their strategies. In robotics, Q-learning helps robots learn tasks through trial and error. In finance, it can be used to search for trading strategies by learning which actions work best under given market conditions. This adaptability makes it a useful tool across many sectors.

How can I visualize the Q-table when implementing Q-learning?

Visualizing the Q-table can be done using heatmaps or 3D surface plots. These visualizations help illustrate the values associated with different state-action pairs. Tools like Matplotlib in Python make it easy to create these graphics. By plotting Q-values, you can see how the agent's understanding of the environment evolves over time. This insight can guide adjustments to the learning process and improve outcomes.
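As a starting point, the sketch below turns a Q-table into a grid of best Q-values per state and draws it as a heatmap. It assumes states are numbered row by row across a rectangular grid; the function names and the small demo table are hypothetical, and the plotting helper needs Matplotlib installed.

```python
def state_value_grid(q_table, rows, cols):
    """Arrange the best Q-value of each state into a rows x cols grid (row-major states)."""
    best = [max(row) for row in q_table]
    return [best[r * cols:(r + 1) * cols] for r in range(rows)]


def plot_heatmap(grid, title="Best Q-value per state"):
    """Draw the grid as a heatmap; requires Matplotlib."""
    import matplotlib.pyplot as plt   # imported here so the helper above has no dependency
    fig, ax = plt.subplots()
    image = ax.imshow(grid, cmap="viridis")
    fig.colorbar(image, ax=ax, label="Q-value")
    ax.set_title(title)
    plt.show()


# Hypothetical 2x2 world with two actions per state
demo_q = [[0.1, 0.4], [0.0, 0.2], [0.7, 0.3], [0.0, 0.0]]
grid = state_value_grid(demo_q, rows=2, cols=2)
print(grid)  # [[0.4, 0.2], [0.7, 0.0]]
# plot_heatmap(grid)  # uncomment to display the figure
```

Calling `plot_heatmap` at intervals during training shows how high values spread outward from rewarding states.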

Use these insights to effectively implement the Q-learning update rule in your projects.